How to Integrate AI Tools Using the Model Context Protocol

AI is no longer just a smart tool; it is becoming the backbone of how modern businesses operate. But even the most advanced models fall short when they can't connect smoothly with the apps, data, and workflows companies rely on. Many organizations using AI development services feel this gap every day. Their AI can think, but it can't act. It can analyze, but it can't access the right context when it matters.

The Model Context Protocol (MCP) is designed to close that gap. MCP gives AI a direct, organized way to plug into real tools and real data without a custom integration for every model and tool pairing. It turns scattered systems into a connected AI ecosystem. With MCP in place, businesses can unlock faster workflows, stronger automation, and AI that works with the clarity and confidence of their best teams.

What Is Model Context Protocol (MCP)?

- Definition: MCP is an open standard that defines how AI systems (like large language models) communicate with external data sources, APIs, or tools in a unified way.

- Why it matters: Before MCP, every integration (say, linking an AI with a CRM system, a database, or file storage) required custom code. With multiple AI models and many tools, that becomes a huge maintenance burden, the so-called “N models × M tools” problem. With MCP, each model and each tool implements the protocol once, so the work grows closer to N + M.

- Analogy: You can think of MCP as a “universal port” (like USB-C) for AI: once a tool is exposed via MCP, any compatible AI application can use it.

In short: MCP turns disjointed, brittle AI-tool integrations into a smooth, scalable, and manageable framework.
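To make the “N models × M tools” point concrete, here is a small arithmetic sketch. It assumes the simplest possible accounting: one custom adapter per model and tool pair without a shared protocol, versus one client per model plus one server per tool with one. The function names are illustrative only.

```python
def point_to_point_integrations(models: int, tools: int) -> int:
    """Without a shared protocol, every model needs its own adapter per tool."""
    return models * tools


def shared_protocol_integrations(models: int, tools: int) -> int:
    """With a shared protocol, each model gets one client and each tool one server."""
    return models + tools


if __name__ == "__main__":
    for models, tools in [(2, 5), (4, 10), (6, 25)]:
        custom = point_to_point_integrations(models, tools)
        shared = shared_protocol_integrations(models, tools)
        print(f"{models} models, {tools} tools: {custom} custom vs {shared} shared")
```

Real projects will not match these counts exactly, but the gap between the two numbers widens quickly as either side grows.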

How MCP Works: Architecture & Key Components

MCP is built around a client–server design, with a few core parts:

- MCP Server: It hosts tools, data sources, APIs, or resources (e.g. databases, cloud storage, external services).

- MCP Client: Embedded within an AI-powered application or agent, it connects to the MCP Server and requests data or tool invocations.

- MCP Host / AI Application: The front end (e.g. a chatbot, a custom app, or an AI assistant) that uses the client to request resources or actions.

Communication between client and server is carried in JSON-RPC 2.0 messages. The AI can request resources, call functions, or fetch context, all via MCP.
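As a rough illustration of what those messages look like, the sketch below builds a `tools/list` request, a `tools/call` request, and a matching reply as plain Python dictionaries. The tool name `lookup_customer`, its arguments, and the reply text are hypothetical, and the helper functions are not part of any SDK.

```python
import json
from itertools import count
from typing import Any, Dict, Optional

_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched


def make_request(method: str, params: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    """Build a JSON-RPC 2.0 request object."""
    message: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def make_result(request: Dict[str, Any], result: Dict[str, Any]) -> Dict[str, Any]:
    """Build a successful JSON-RPC 2.0 response that echoes the request id."""
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# Ask the server which tools it offers.
list_tools = make_request("tools/list")

# Invoke one tool by name (hypothetical tool and arguments).
call_tool = make_request(
    "tools/call",
    {"name": "lookup_customer", "arguments": {"customer_id": "C-1001"}},
)

# What a server reply could look like (made-up content).
reply = make_result(
    call_tool,
    {"content": [{"type": "text", "text": "Customer C-1001: example record"}]},
)

for message in (list_tools, call_tool, reply):
    print(json.dumps(message, indent=2))
```

In practice an MCP SDK builds and parses these messages for you; the point here is only that the wire format is ordinary JSON.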

Because MCP standardizes these interactions, any new data source or tool exposed via an MCP Server becomes immediately usable by any MCP-compatible AI, with no need for bespoke integrations each time.
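The toy sketch below shows why a standard interface makes tools reusable: a server registers tools once, and any caller that can send `tools/list` and `tools/call` can discover and use them. This is not the official MCP SDK and it skips transports, initialization, and schemas; `ToyToolServer`, `ask`, and the `add` tool are all made up for illustration.

```python
import json
from typing import Any, Callable, Dict, Optional


class ToyToolServer:
    """In-memory stand-in for an MCP server (illustration only)."""

    def __init__(self) -> None:
        self._tools: Dict[str, Dict[str, Any]] = {}

    def tool(self, name: str, description: str) -> Callable:
        """Decorator that registers a function as a named tool."""
        def register(func: Callable) -> Callable:
            self._tools[name] = {"description": description, "handler": func}
            return func
        return register

    def handle(self, raw_request: str) -> str:
        """Dispatch one JSON-RPC 2.0 request and return the JSON reply."""
        request = json.loads(raw_request)
        reply: Dict[str, Any] = {"jsonrpc": "2.0", "id": request.get("id")}
        method = request.get("method")
        params = request.get("params", {})
        if method == "tools/list":
            reply["result"] = {"tools": [
                {"name": name, "description": entry["description"]}
                for name, entry in self._tools.items()
            ]}
        elif method == "tools/call" and params.get("name") in self._tools:
            handler = self._tools[params["name"]]["handler"]
            output = handler(**params.get("arguments", {}))
            reply["result"] = {"content": [{"type": "text", "text": str(output)}]}
        elif method == "tools/call":
            reply["error"] = {"code": -32602, "message": "Unknown tool"}
        else:
            reply["error"] = {"code": -32601, "message": "Method not found"}
        return json.dumps(reply)


def ask(server: ToyToolServer, method: str,
        params: Optional[Dict[str, Any]] = None, request_id: int = 1) -> Dict[str, Any]:
    """Minimal 'client': serialize a request, send it, parse the reply."""
    request: Dict[str, Any] = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        request["params"] = params
    return json.loads(server.handle(json.dumps(request)))


server = ToyToolServer()


@server.tool("add", "Add two integers.")
def add(a: int, b: int) -> int:
    return a + b


listing = ask(server, "tools/list")
answer = ask(server, "tools/call", {"name": "add", "arguments": {"a": 2, "b": 3}}, request_id=2)
print(listing["result"]["tools"])
print(answer["result"]["content"][0]["text"])
```

Adding a second tool means one more decorated function on the server; the `ask` helper, standing in for any compatible client, does not change.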

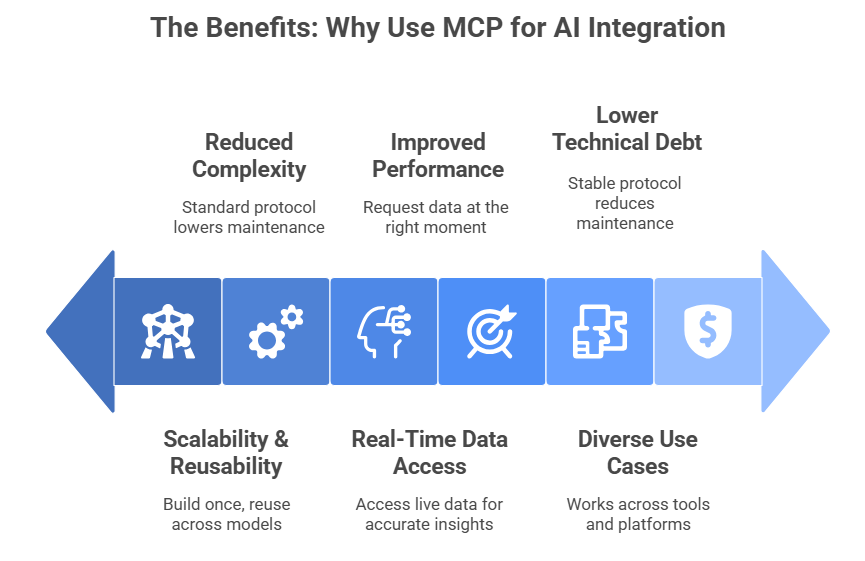

The Benefits: Why Use MCP for AI Integration

Scalability & Reusability

MCP makes AI integrations scalable by letting teams build a connection once and reuse it across different AI models. This removes the need to rewrite or duplicate integrations every time a new model is added. It also keeps systems clean as your AI stack grows. With a single integration layer, teams move faster and reduce repeated development work.

Reduced Complexity

MCP reduces the heavy complexity that normally comes with managing multiple model-tool connections. Instead of custom coding every integration, developers use a standard protocol that works for all models. This lowers maintenance work and simplifies long-term upgrades. It also minimizes errors because teams work with one predictable framework.

Real-Time, Context-Rich Data Access

MCP gives AI tools the power to access live data directly from APIs, servers, or databases. This allows models to work with real-time information and deliver more accurate insights. Fresh context improves the quality of outputs because the model responds with updated details instead of relying on old or static data.

Improved AI System Performance and Reliability

AI systems perform better when they have access to correct and current context. MCP supports this by allowing AI tools to request the right data at the right moment. This can reduce hallucinations and makes it less likely that the model fills gaps with false assumptions. With more grounded responses, teams gain trust in AI-driven workflows.

Support for Diverse Use Cases

MCP is built to work across a wide variety of tools, platforms, and enterprise systems. It supports use cases in CRM, cloud storage, developer operations, analytics, automation, and more. This flexibility helps teams roll out AI solutions across different departments without creating separate integrations for each tool.

Lower Technical Debt & Faster Time-to-Market

Traditional integrations often create long-term technical debt because they require constant updates and fixes. MCP solves this by acting as a stable, standard protocol for all AI-tool communication. This reduces maintenance issues and saves development time. Teams can launch new features quickly since they start with a ready foundation.

Real-World Use Cases & Applications

Here are some of the ways MCP is being used:

- Enterprise tools integration: Linking AI assistants to internal tools like GitHub, Slack, databases, or CRMs, enabling automated workflows, data retrieval, or internal analytics.

- Developer productivity tools: AI assistants accessing live code repositories, running builds, reviewing pull requests, or generating documentation with up-to-date context.

- Customer support automation: Chatbots connected to internal databases or support systems to retrieve user data, tickets, or transactions, giving accurate, context-aware responses.

- Dynamic data analysis & reporting: AI agents that fetch real-time data from APIs or databases to generate reports, summaries, or insights, which is useful for finance, operations, or marketing teams.

- Content & publishing platforms: AI tools dynamically retrieving content, metadata, or analytics data to assist in content creation, summarization, personalization or recommendations.

In short: MCP is making AI much more useful beyond simple text generation, enabling context-aware, integrated, utility-driven AI solutions.

Challenges & Considerations

Adopting MCP isn’t free from caveats. Some key concerns include:

- Maturity and Ecosystem Readiness: As of now, MCP is still evolving, and not all tools/platforms fully support it.

- Security & Governance Risks: Giving AI models access to external tools and data sources expands the attack surface. Without proper permissions, access control, logging or governance, sensitive data may be exposed.

- Continued Maintenance: While MCP reduces redundant integrations, the MCP server(s) themselves need maintenance. Their configuration, monitoring, and permission management must be robust.

- Potential Performance Overhead: Every tool call and data retrieval routed through MCP is an extra round trip, so MCP-based operations may add latency or complexity, especially for real-time applications. Some early academic studies even question whether integration always improves performance.

So, while MCP brings big benefits, teams must adopt it wisely, balancing flexibility with security, performance, and maintainability.
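The governance and overhead concerns above can be sketched in a few lines. The example below wraps tool calls in a per-role allowlist, writes an audit log entry for every attempt, and records how long each call took. It is a minimal sketch, not an MCP feature: the role name, tool names, and the `GuardedTools` class are all hypothetical.

```python
import logging
import time
from typing import Any, Callable, Dict, Set

audit_log = logging.getLogger("mcp.audit")  # hypothetical logger name


class PermissionDenied(Exception):
    """Raised when a role tries to call a tool it is not allowed to use."""


class GuardedTools:
    """Wraps tool functions with an allowlist, audit logging, and timing."""

    def __init__(self, tools: Dict[str, Callable[..., Any]],
                 allowed_by_role: Dict[str, Set[str]]) -> None:
        self._tools = tools
        self._allowed = allowed_by_role

    def call(self, role: str, name: str, **arguments: Any) -> Any:
        # Deny unknown tools and tools outside the role's allowlist.
        if name not in self._tools or name not in self._allowed.get(role, set()):
            audit_log.warning("DENY role=%s tool=%s", role, name)
            raise PermissionDenied(f"{role} may not call {name}")
        started = time.perf_counter()
        result = self._tools[name](**arguments)
        elapsed_ms = (time.perf_counter() - started) * 1000
        audit_log.info("ALLOW role=%s tool=%s elapsed_ms=%.2f", role, name, elapsed_ms)
        return result


# Hypothetical tools: one read-only, one destructive.
tools = {
    "read_ticket": lambda ticket_id: {"id": ticket_id, "status": "open"},
    "delete_ticket": lambda ticket_id: True,
}
guarded = GuardedTools(tools, {"support_bot": {"read_ticket"}})

if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    print(guarded.call("support_bot", "read_ticket", ticket_id="T-1"))
    try:
        guarded.call("support_bot", "delete_ticket", ticket_id="T-1")
    except PermissionDenied as error:
        print("blocked:", error)
```

Keeping the allowlist and the log in one wrapper means every tool call passes through the same checkpoint, which also gives a single place to watch latency.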

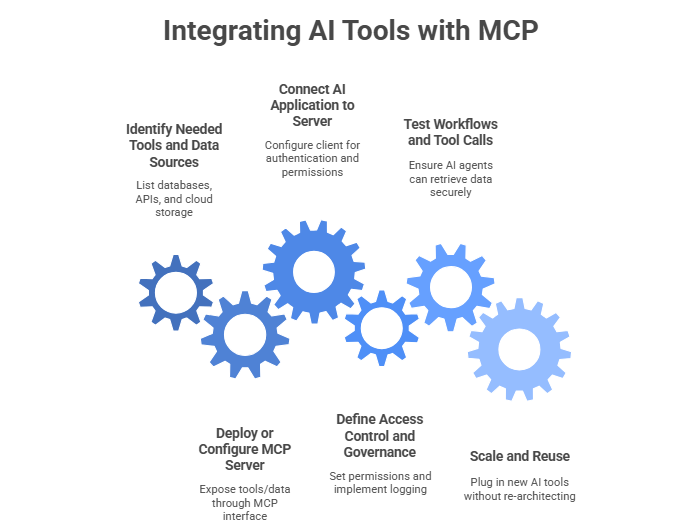

How to Start Integrating AI Tools With MCP

If you’re building an AI application and want to use MCP, here’s a simple roadmap:

- Identify needed tools and data sources: list databases, APIs, cloud storage, or third-party services your AI will access.

- Deploy or configure an MCP Server: expose the identified tools and data through an MCP interface. Use existing open-source MCP server implementations or build custom ones as needed.

- Connect your AI application (MCP Host/Client) to the server: configure the client to authenticate, handle permissions, and request context or tool calls.

- Define access control and governance: set permissions to specify what the AI can access or modify. Implement logging and an audit trail, especially if sensitive data is involved.

- Test workflows and tool calls: ensure AI agents can retrieve data or invoke tools correctly and securely.

- Scale and reuse: once MCP integration is set up, new AI tools or services can plug in without re-architecting the system.

Following this path lets teams turn disjointed AI + tool integrations into a unified, maintainable, and scalable system.
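For the "expose tools" and "test workflows" steps, one lightweight starting point is to write each tool down as a definition with a name, a description, and an `inputSchema`, then check sample arguments against it before wiring anything to a model. The sketch below assumes a hypothetical `search_orders` tool and performs only a small subset of JSON Schema checks (required fields and basic types); a real project would more likely use a full schema validator.

```python
from typing import Any, Dict, List

# Hypothetical tool definition in the name / description / inputSchema shape.
SEARCH_ORDERS: Dict[str, Any] = {
    "name": "search_orders",
    "description": "Return recent orders for one customer.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "customer_id": {"type": "string"},
            "limit": {"type": "integer"},
        },
        "required": ["customer_id"],
    },
}

_JSON_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def check_arguments(tool: Dict[str, Any], arguments: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the arguments look valid."""
    schema = tool["inputSchema"]
    properties = schema.get("properties", {})
    problems: List[str] = []
    for field in schema.get("required", []):
        if field not in arguments:
            problems.append(f"missing required argument: {field}")
    for field, value in arguments.items():
        spec = properties.get(field)
        if spec is None:
            problems.append(f"unexpected argument: {field}")
            continue
        declared = spec.get("type")
        expected = _JSON_TYPES.get(declared)
        # bool is a subclass of int in Python, so reject it for non-boolean fields.
        bool_mismatch = isinstance(value, bool) and declared != "boolean"
        if expected is not None and (bool_mismatch or not isinstance(value, expected)):
            problems.append(f"wrong type for {field}: expected {declared}")
    return problems


if __name__ == "__main__":
    samples = [
        {"customer_id": "C-1", "limit": 5},
        {"limit": 5},
        {"customer_id": "C-1", "limit": "ten"},
    ]
    for sample in samples:
        print(sample, "->", check_arguments(SEARCH_ORDERS, sample) or "ok")
```

Checks like this can run in an ordinary test suite, so malformed tool calls are caught before they reach a live system.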

The Road Ahead: What’s Next for MCP & AI Integration

Recent research signals growing momentum around MCP. For instance, a new benchmark study named MCP-AgentBench released in 2025 tests how real-world AI agents perform when using MCP-mediated tools across hundreds of tasks.

Still, early findings show mixed results: while MCP enables broad tool access, success rates in complex, real-world tool interactions vary depending on model, server quality, and task complexity.

This suggests that as MCP matures, developers and enterprises will need to pay careful attention to server quality, tool reliability, and security governance. But the potential is huge: the next generation of AI systems could truly act as intelligent, context-aware agents across business workflows, data systems, and external services.

Wrapping Up: Why MCP Matters for Modern AI Projects

If you want to build AI solutions that go beyond simple chat or content generation, solutions that interact with real data, trigger actions, and plug into enterprise systems, then MCP is a game-changer.

It helps developers avoid repetitive custom integrations, reduces technical debt, and enables scalable, powerful AI tools that stay connected to real-world workflows. At the same time, it supports better AI system performance, real-time data access, and more reliable, context-aware outputs.

For any business or tech team looking to harness AI at scale, embracing MCP and working with a capable AI development services partner becomes a smart, forward-looking move.

If you’re ready to start or scale your AI integration work, App Maisters can provide expert guidance, robust implementation, and secure deployment, helping you build future-ready AI solutions that harness the full potential of the Model Context Protocol.

FAQs

What is the Model Context Protocol in AI?

The Model Context Protocol (MCP) helps AI models access tools, data, and actions in a structured way. App Maisters uses MCP to build AI systems that deliver faster, more accurate results.

How do MCPs improve AI integration?

MCPs remove the need for complex custom integrations. They let AI connect with APIs, databases, and business apps through one standard method. App Maisters applies MCP to streamline AI integration for enterprises.

Why are MCPs important for AI system performance?

MCPs give AI real-time, context-rich data. This reduces errors and improves reliability. App Maisters uses this approach to boost accuracy and cut down AI hallucinations.

Can MCPs support enterprise use cases?

Yes. MCPs work with CRM platforms, cloud tools, dev systems, and internal databases. App Maisters helps enterprises adopt MCP to scale AI workflows across multiple departments.

How do MCPs reduce technical debt?

MCP replaces different integration styles with one stable standard. This cuts long-term maintenance and speeds up AI delivery. App Maisters follows this model to build cleaner and more scalable AI solutions.

Why choose App Maisters for MCP-based AI solutions?

App Maisters specializes in AI integration, MCP implementation, and custom AI development. Their expertise helps businesses build connected, efficient, and high-performing AI ecosystems.

Are MCPs compatible with AI development services?

Yes. MCPs work well with custom AI development services because they support flexible tool connections and live data access. App Maisters combines both to help companies build smarter and more responsive AI systems.